VR Cognitive Impairment Tests

A Virtual Reality application for detecting signs of mild cognitive impairment and Alzheimer's disease through immersive, automated testing.

The Problem

Traditional cognitive impairment tests like the Prospective Memory Test (PMT) require trained proctors to administer and monitor patients throughout the entire examination. This creates significant resource constraints for healthcare facilities.

How can we automate cognitive testing while maintaining accuracy and creating a more immersive, realistic testing environment than 2D computer screens?

Target Audience

- Medical Facilities: Hospitals and clinics needing efficient cognitive screening

- Patients: Individuals being tested for mild cognitive impairment and Alzheimer's

- Healthcare Providers: Doctors and researchers analyzing cognitive health

- Research Institutions: Organizations studying VR's effectiveness in healthcare

Project Overview

This VR application digitizes the Prospective Memory Test (PMT), developed by Loewenstein and Acevedo in 2001 and revised in 2015. The test evaluates whether users show signs of mild cognitive impairment and Alzheimer's disease through specific task-based assessments.

The application includes two primary tests: the Money Test (giving specific amounts of currency to a virtual proctor) and the Pill Test (organizing medication in a pillbox based on a weekend schedule). Users are fully immersed in a realistic office environment where they can physically interact with objects.

By bringing this test into VR, we aim to remove the need for constant proctor supervision while providing a more realistic testing environment than traditional 2D computer screens.

Team & Collaboration

Working with medical professionals and researchers

VR Experience Demo

See the tests in action

The Two Tests

Based on the revised Prospective Memory Test (PMT)

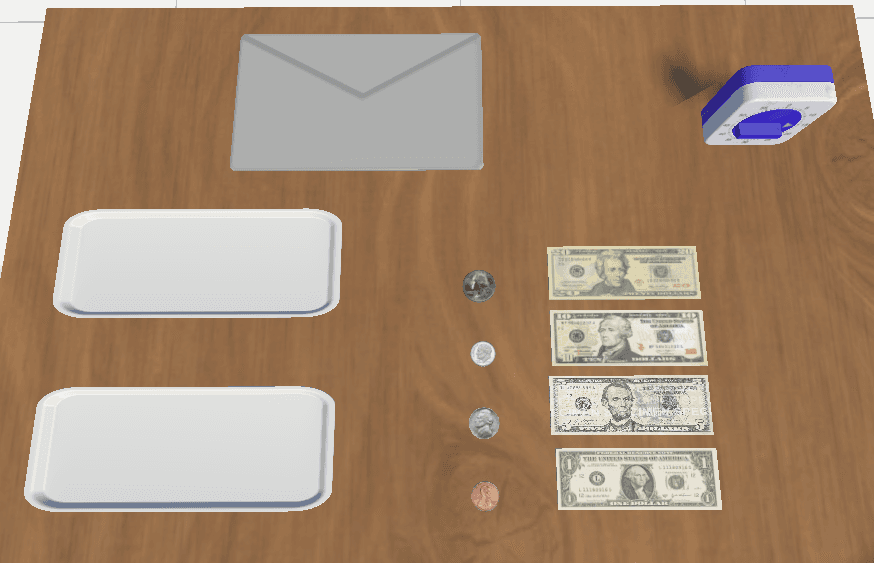

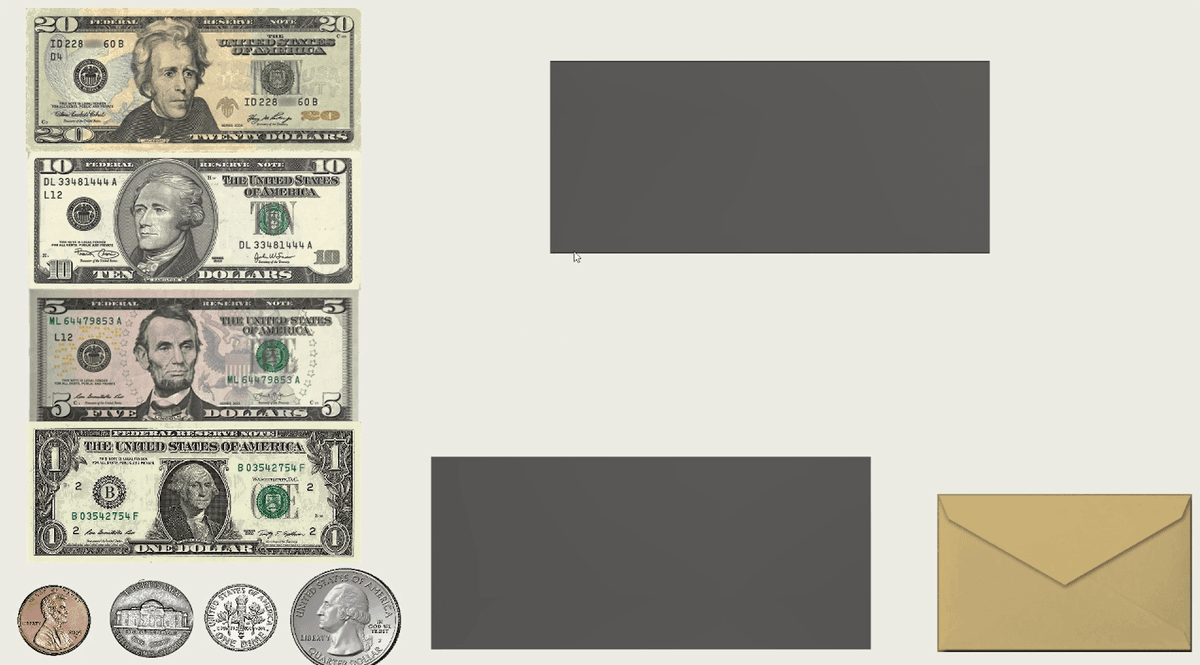

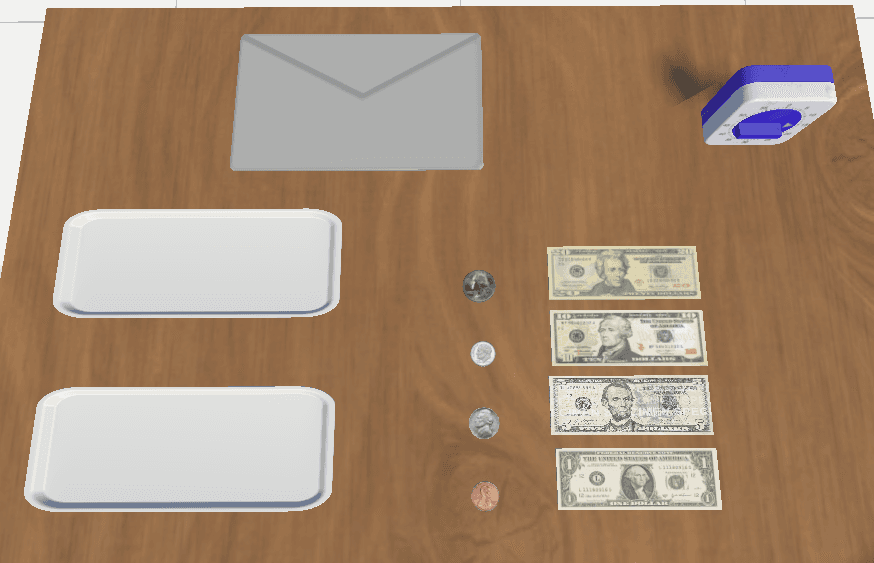

Test A: Money Test

The user opens an envelope containing dollar bills and coins. They must give the virtual proctor $10.25 and give themselves $5.50.

The system tracks which bills and coins are picked up, who receives them, and how long each action takes. Scoring is based on accuracy and the number of reminders needed.
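The accuracy part of this check can be sketched in a few lines. The project's scripts are C# inside Unity, so the Python below only illustrates the logic. The names `TARGETS_CENTS` and `check_money_test`, the recipient labels, and the denominations in the example are hypothetical. Amounts are kept as integer cents to avoid floating-point rounding.

```python
from collections import defaultdict

# Target amounts from the test description, in cents.
TARGETS_CENTS = {"proctor": 1025, "self": 550}

def check_money_test(transfers):
    """Sum what each recipient received and compare against the targets.

    transfers: iterable of (recipient, value_in_cents), one entry per
    bill or coin handed over.
    Returns {recipient: (total_received, is_correct)}.
    """
    totals = defaultdict(int)
    for recipient, cents in transfers:
        totals[recipient] += cents
    return {
        who: (totals[who], totals[who] == target)
        for who, target in TARGETS_CENTS.items()
    }

# Example (assumed denominations): a $10 bill and a quarter go to the
# proctor; a $5 bill and two quarters are kept.
result = check_money_test([
    ("proctor", 1000), ("proctor", 25),
    ("self", 500), ("self", 25), ("self", 25),
])
print(result)
```

Timing and reminder counts would be recorded separately; this sketch covers only whether the two totals match.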

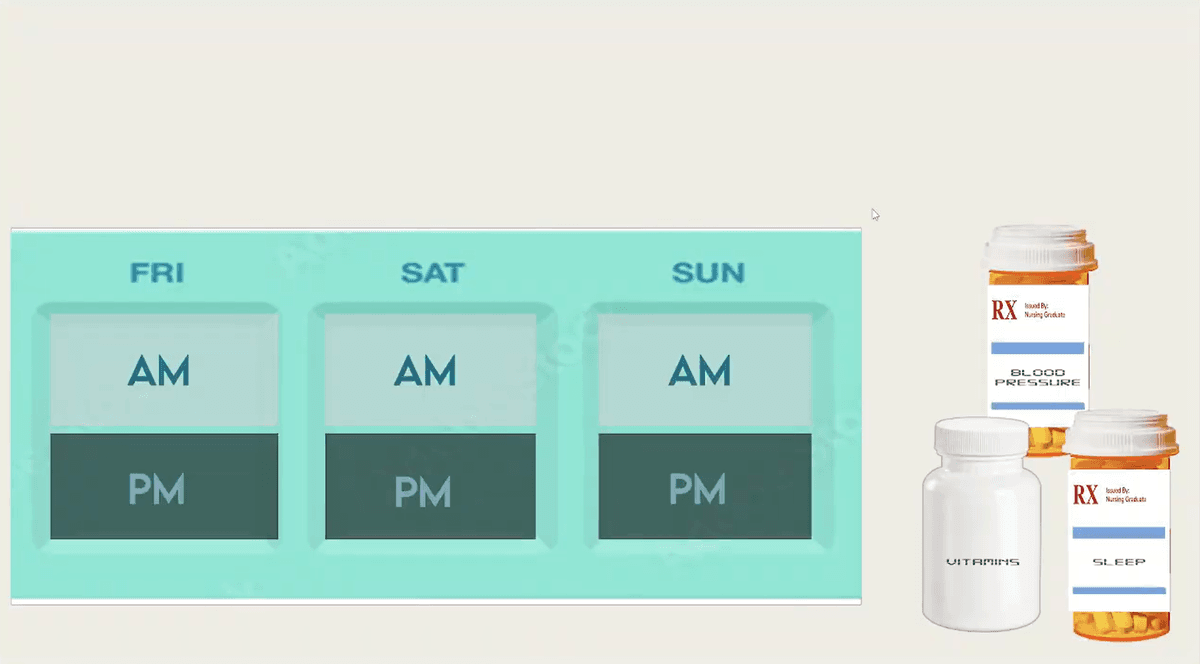

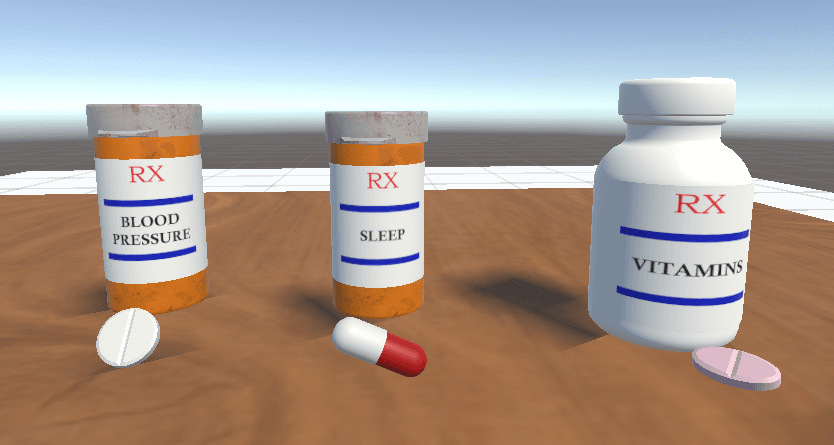

Test B: Pill Test

The user imagines a weekend trip and must organize pills (multivitamin, blood pressure medication, sleeping pill) into a pillbox based on a schedule.

Schedule: Daily multivitamin with breakfast, blood pressure medication morning and evening, sleeping pill before bed. Trip spans Friday evening through Sunday evening.
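The schedule implies an expected pillbox layout, which the sketch below derives. It rests on several assumptions: the box has one AM and one PM compartment per day, "with breakfast" and "morning" map to AM, "evening" and "before bed" map to PM, and the Sunday PM compartment counts as part of the trip. All identifiers are hypothetical, and Python is used only to show the reasoning.

```python
# Which half-day compartment(s) each medication belongs in (assumed mapping).
DOSE_SLOTS = {
    "multivitamin": {"AM"},          # daily, with breakfast
    "blood_pressure": {"AM", "PM"},  # morning and evening
    "sleeping_pill": {"PM"},         # before bed
}

# Compartments covered by a trip from Friday evening through Sunday evening.
TRIP_SLOTS = [("FRI", "PM"), ("SAT", "AM"), ("SAT", "PM"),
              ("SUN", "AM"), ("SUN", "PM")]

def expected_layout():
    """Return {(day, half): sorted list of medications} for the trip."""
    return {
        (day, half): sorted(
            med for med, halves in DOSE_SLOTS.items() if half in halves
        )
        for day, half in TRIP_SLOTS
    }

def placement_errors(actual):
    """Return the compartments whose contents differ from the expected layout.

    actual: {(day, half): list of medications the user placed there}.
    """
    expected = expected_layout()
    compartments = set(expected) | set(actual)
    return sorted(
        c for c in compartments
        if sorted(actual.get(c, [])) != expected.get(c, [])
    )
```

Under these assumptions the filled box holds two multivitamins, five blood pressure pills, and three sleeping pills, and the Friday AM compartment stays empty.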

Development Process

From 2D to immersive 3D VR

Foundation Analysis

Analyzed the existing 2D Unity version created by Katarzyna Paternak, along with medical documentation from Dr. Curiel's team. Studied the PMT protocol, scoring criteria (Intention to Perform, Accuracy of Response, Need of Reminders), and testing procedures to ensure medical accuracy.

3D Asset Creation

Created and sourced all necessary 3D models using multiple tools and repositories:

- Blender: Custom modeling for dollar bills, coins, pill bottles, and pillbox

- Unity Asset Store: Office furniture, table, clock, and environmental props

- Sketchfab: Pill bottles and supplementary objects

VR Implementation

Built the application in Unity using the XR Interaction Toolkit for Meta Quest 3 integration. Developed C# scripts for object interaction, scoring logic, and test flow.

Created a tutorial scene to familiarize users with VR controls (grabbing objects, moving items) before beginning the actual cognitive tests.

AI Integration

Integrated OpenAI's Whisper API for speech-to-text transcription and the ChatGPT API for evaluating user responses when they are asked to repeat instructions. The goal is to remove the need for a human to judge verbal recall accuracy.
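A minimal sketch of the request such an evaluation step might send. The endpoint and the `messages` format follow OpenAI's public Chat Completions API; the model name, the prompt wording, and the function `build_recall_request` are assumptions for illustration, not the project's actual prompt. The transcript is assumed to come from the Whisper transcription step. The sketch only builds the payload and never sends it, so no API key is involved.

```python
import json

CHAT_COMPLETIONS_URL = "https://api.openai.com/v1/chat/completions"

def build_recall_request(instructions, transcript, model="gpt-4o-mini"):
    """Build a Chat Completions payload asking the model to grade a recall.

    instructions: the text the user was asked to repeat.
    transcript:   what speech-to-text heard the user say.
    model:        assumed model name; substitute whatever the project uses.
    """
    system = (
        "You grade whether a person's spoken repetition preserves the key "
        "details of the test instructions. Reply with CORRECT or INCORRECT "
        "followed by one short reason."
    )
    user = f"Instructions: {instructions}\nPerson said: {transcript}"
    return {
        "model": model,
        "temperature": 0,  # keep grading as repeatable as possible
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }

payload = build_recall_request(
    "Give the proctor $10.25 and keep $5.50 for yourself.",
    "give the proctor ten twenty five and keep five fifty",
)
print(json.dumps(payload, indent=2))
```

The payload would be sent as JSON in a POST request to `CHAT_COMPLETIONS_URL` with an `Authorization: Bearer` header carrying the API key.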

Technical Deliverables

Key features and systems developed

VR Interaction System

XR Grab Interactable components with physics-based object handling. Rigidbody and collider systems for realistic object manipulation and collision detection.

Scoring Engine

Automated scoring based on three criteria: Intention to Perform, Accuracy of Response, and Need of Reminders. Tracks timing, object selection, and placement accuracy.
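One way to picture what the engine records for each task is a small data structure. The write-up does not give point values, so this sketch assigns none. It only stores the three criteria plus a timestamped event log. The class and field names are hypothetical, and Python stands in for the project's C#.

```python
from dataclasses import dataclass, field

@dataclass
class TaskRecord:
    """Per-task observations that a scorer could later convert to points."""
    task: str
    intention_to_perform: bool = False  # did the user start the task?
    accurate_response: bool = False     # did the final result match the target?
    reminders_given: int = 0            # how many prompts were needed
    events: list = field(default_factory=list)  # (seconds, description)

    def log(self, seconds, description):
        """Append a timestamped event such as a grab or a placement."""
        self.events.append((seconds, description))

    def duration(self):
        """Seconds between the first and last logged event."""
        if not self.events:
            return 0.0
        return self.events[-1][0] - self.events[0][0]

record = TaskRecord(task="money")
record.log(2.0, "picked up $10 bill")
record.log(6.5, "placed $10 bill in proctor tray")
record.intention_to_perform = True
record.reminders_given += 1
```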

SpawnOnGrab Scripts

Dynamic object spawning system that triggers when users interact with specific items (e.g., opening envelope spawns money on table).

Demonstration System

Smooth animation system showing correct object movement paths to demonstrate proper test procedures before user attempts.
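A common way to get this kind of smooth movement is to ease a linear interpolation between two points. The sketch below assumes a straight-line path and smoothstep easing, which may differ from the project's animation. `smoothstep` and `position_at` are illustrative names, and the Python is only a stand-in for Unity C#.

```python
def smoothstep(t):
    """Ease-in/ease-out curve on [0, 1]; input is clamped to that range."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)

def position_at(start, end, t):
    """Position along the straight line from start to end at progress t.

    start, end: (x, y, z) tuples. t runs from 0 (start) to 1 (end).
    Easing makes the object accelerate away from start and slow into end.
    """
    s = smoothstep(t)
    return tuple(a + (b - a) * s for a, b in zip(start, end))

# Sample the path of an object moving from the table to a tray.
for step in range(5):
    print(position_at((0.0, 1.0, 0.0), (0.4, 1.0, 0.2), step / 4))
```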

AI Voice Processing

Whisper API for speech-to-text transcription and ChatGPT API for evaluating verbal response accuracy against test instructions.

Collision Detection

OnTriggerEnter/OnCollisionEnter methods tracking correct pill and money placement in designated zones for automated scoring.
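The bookkeeping behind zone-based tracking can be sketched independently of the engine. In this hypothetical version each zone remembers which objects are currently inside it, so an item that is placed and then removed is not counted. The `on_enter` and `on_exit` methods stand in for calls made from Unity trigger callbacks; the use of an exit callback is an assumption, since the write-up mentions only the enter callbacks.

```python
class PlacementZone:
    """Tracks which objects currently sit inside one designated zone."""

    def __init__(self, name):
        self.name = name
        self.contents = set()

    def on_enter(self, object_id):
        """Called when an object enters the zone's trigger volume."""
        self.contents.add(object_id)

    def on_exit(self, object_id):
        """Called when an object leaves; ignoring unknown ids is deliberate."""
        self.contents.discard(object_id)

    def matches(self, expected_ids):
        """True when the zone holds exactly the expected objects."""
        return self.contents == set(expected_ids)

tray = PlacementZone("proctor_tray")
tray.on_enter("bill_10")
tray.on_enter("bill_5")
tray.on_exit("bill_5")   # user changed their mind
tray.on_enter("quarter_1")
```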

Visual Design Elements

3D models and environments

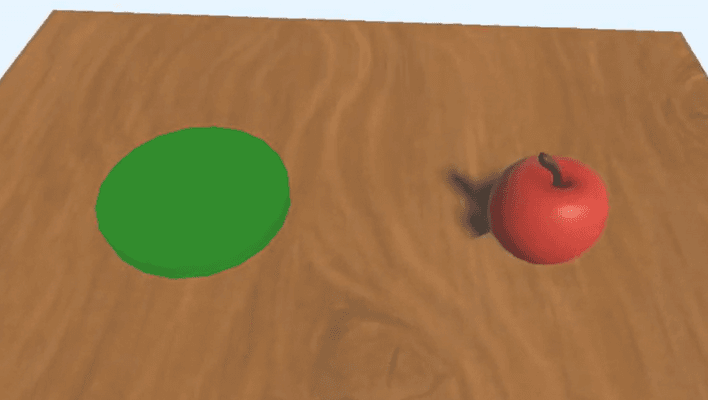

Tutorial Scene

Users learn VR controls by picking up an apple and placing it on a green plate

Money Scene

Realistic dollar bills, coins, envelope, and trays on a wooden table

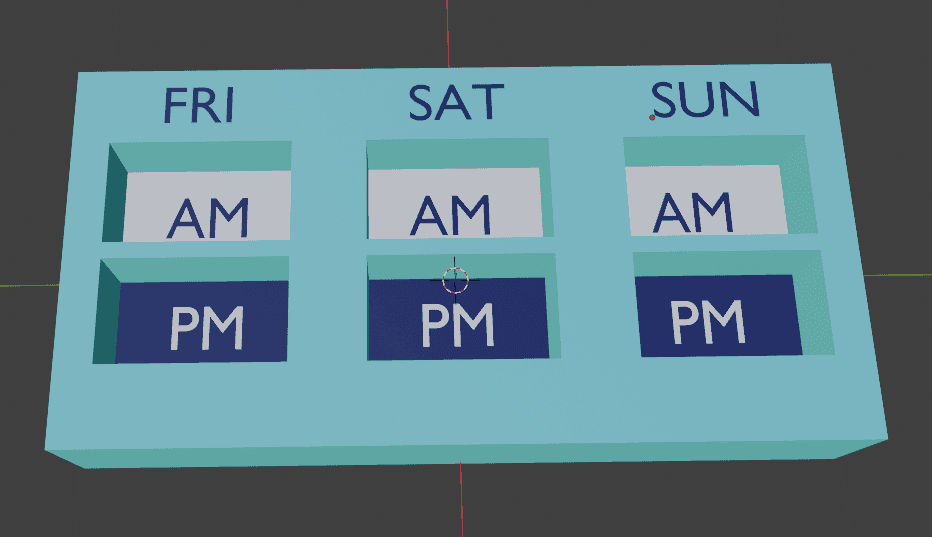

Pill Box Design

Custom Blender model with FRI/SAT/SUN days and AM/PM compartments

Pills Scene

Pill bottles with labels and pills sourced from Sketchfab, arranged on a table for the pill test

Challenges & Solutions

Technical and design obstacles overcome

VR Complexity

Challenge: VR allows far more user behavior than a 2D interface. Users can throw objects, walk outside the test area, and trigger physics glitches.

Solution: Implemented boundary constraints, object respawn systems, and robust physics handling to manage unexpected user behavior while maintaining test validity.
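The respawn idea reduces to a bounds check. This sketch assumes an axis-aligned box around the test area and returns the object's spawn point whenever it leaves that box. `Bounds` and `resolve_position` are hypothetical names, the coordinates are made up, and Python is used only to show the logic.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Bounds:
    """Axis-aligned box given by its minimum and maximum (x, y, z) corners."""
    min_corner: tuple
    max_corner: tuple

    def contains(self, point):
        return all(
            lo <= value <= hi
            for lo, value, hi in zip(self.min_corner, point, self.max_corner)
        )

def resolve_position(position, spawn_point, bounds):
    """Keep the object where it is if in bounds; otherwise send it home."""
    return position if bounds.contains(position) else spawn_point

# Assumed test area: a 2 m x 2 m region from floor level up to 2 m.
test_area = Bounds((-1.0, 0.0, -1.0), (1.0, 2.0, 1.0))
spawn = (0.0, 0.8, 0.3)

print(resolve_position((0.2, 0.9, 0.1), spawn, test_area))   # stays put
print(resolve_position((3.5, 0.1, -4.0), spawn, test_area))  # thrown away
```

Running this check every physics step, or on a short timer, would return a thrown bill or pill to the table without restarting the test.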

OpenAI Integration

Challenge: Calling the OpenAI APIs (Whisper, ChatGPT) from the Meta Quest 3 headset ran into permission issues, limited internet access, and API key privacy concerns.

Solution: Created a separate demo showcasing the API functionality. Work on a secure implementation for headset deployment is ongoing.

Performance Optimization

Challenge: High-quality 3D office models (Professor Visser's and Dr. Lisetti's offices) caused significant frame rate drops on the Quest 3.

Solution: Used generic office assets from the Unity Asset Store instead. Future iterations will optimize the high-quality models through polygon reduction and level-of-detail (LOD) systems.

Methods & Technology Stack

Development

- Unity Game Engine

- C# Programming

- VS Code IDE

- XR Interaction Toolkit

3D Assets

- Blender 3D

- Unity Asset Store

- Sketchfab

- Custom modeling

AI & Audio

- OpenAI Whisper API

- ChatGPT API

- NaturalReader TTS

- Voice Recognition

Hardware

- Meta Quest 3

- VR Controllers

- 6-DOF Tracking

- Hand Tracking

Key Learnings

What this project taught me

VR Development Expertise

Mastered Unity's XR Interaction Toolkit, physics-based VR interactions, and Meta Quest 3 development. Learned to design for 3D space constraints and handle unexpected user behaviors that don't exist in 2D applications.

Healthcare Technology

Gained insight into medical testing protocols, cognitive assessment methodologies, and the importance of precision in healthcare applications. Learned to balance user experience with strict medical requirements.

AI Integration

Explored OpenAI API integration for speech recognition and natural language evaluation. Discovered the challenges and opportunities in combining AI with VR for automated assessment systems.

3D Modeling Skills

Developed proficiency in Blender for creating custom 3D assets. Learned to optimize models for VR performance and create realistic textures for medical-grade accuracy in object representation.

Impact & Reflection

This project represents the intersection of healthcare innovation and immersive technology. By automating cognitive testing while increasing immersion, we're making early detection of cognitive impairment more accessible and scalable for medical facilities.

The shift from 2D to VR creates a fundamentally more realistic testing environment—users physically manipulate objects in 3D space rather than clicking and dragging on a screen. This closer simulation of real-world tasks may yield more accurate cognitive assessments.

Beyond technical achievement, this project demonstrates VR's potential to transform medical diagnostics, making sophisticated testing procedures more efficient and accessible.

Future Development

Additional Tests

Expand the application to include NRAT and LASSI exams—additional cognitive assessments that follow similar protocols to the PMT, creating a comprehensive VR testing suite.

Environment Optimization

Optimize high-quality office models (Professor Visser's and Dr. Lisetti's offices) through polygon reduction and LOD systems to maintain visual fidelity while achieving target frame rates.

AI Deployment

Resolve OpenAI API integration challenges on Meta Quest 3 to enable fully automated voice-based instruction recall and evaluation without external processing.

Research Implications

This project aims to contribute research on how full immersion in VR environments affects cognitive task performance and memory retention compared to traditional 2D computer-based testing. The findings could inform future development of VR-based medical assessments across multiple healthcare domains.